This is the presentation I gave last week at eResearch Australasia in Brisbane. There's a page of links to the tools and references mentioned here. The slides were converted to blog form with Peter Sefton's pptx_to_md converter.

PDF version | Powerpoint Version

Hi - I’m Mike Lynch, and I work at the Sydney Informatics Hub. Our team provides support, training and expertise in statistics, data science, software engineering, bioinformatics and high performance computing – ranging from hour-long consultations to extended projects. Our bioinformatics team are participants in the Australian BioCommons, and we’re also providing data science and software engineering skills to the ATAP and LDaCA projects, which are building text analytics platforms and the infrastructure for a linguistic data commons. My background is in software engineering, and I’ve worked in research support from a lot of different angles – in a faculty, as part of a research office, and in specialised eResearch and infrastructure teams. I was also on the organising committee of the Research Software Engineering Asia Australia Unconference in September.

In this presentation I’m going to talk about how we talk about research software and the people who write it and support it. There will be a bit of technical stuff, but mostly I’m going to talk about feelings. One of the great things about working in research support is the people I get to work with – these are really talented folks, whether they’re researchers themselves or specialists who collaborate with researchers, building tools and platforms which enable us to do amazing things we couldn’t do before. But when they talk about their code, there’s this thing they do where they dismiss it – they call it “my janky code” or worse, as if it’s something that gets the job done, but is somehow lacking, or not quite the real deal. The working title for this talk was “abject-oriented software”, which I thought was a joke that was maybe too niche, but couldn’t let go of.

Another thing you hear – and this might be a problem for building a community around the idea of RSEs – is "I'm not a software engineer". Now, there are a bunch of things which they're implying by this, apart from what their job title is. Like me, they might not have formal qualifications in software engineering (I did Arts/Law, and I’ve worked with people I call “engineer engineers”, who made helicopters fly, so I don’t feel like an engineer compared to that). Or they have a research career, and that's their professional identity. Or they're a data scientist, or bioinformatician, or statistician. They might also be trying to avoid some of the negative associations which software engineering has: they can do things without the bureaucratic overhead of having to raise a ticket, or negotiating through all the hurdles of enterprise IT.

Now this is the sort of gatekeeping which the IT industry has been doing to itself for years. The title of this talk is a reference to a book from the 80s called "Real Men Don't Eat Quiche". That book was an ironic parody, not a literal piece of toxic masculinity, but it was an idea that struck a kind of nerve, and people would make jokes about “real programmers”, the sort of jokes which aren’t really jokes. Real programmers write in C, they don't use scripting languages. They do their own memory management. Moving forward to the 90s, they don't do web development. They don't use GUIs. They don't code in some fancy IDE, they use vim. This is juvenile stuff, but unfortunately, it's still going on within the tech community. It can also happen within the research support community, especially when people from the IT side of the house are talking about the languages and platforms which research software uses. I'll come back to that point later.

The best expression I've seen of the highs and lows of programming is the Sorcerer’s Apprentice segment from the Disney film Fantasia. At first you think, I’m a genius! I’ve made my broomstick carry water for me! This is wonderful!

And then you have a bit of a nap, and when you wake up there are hundreds of broomsticks flooding your castle and you’re desperately trying to figure out what went wrong. At least he’s reading the manual.

And then Dad comes home and has to clean up the mess. The sorcerer is the myth of the ideal programmer: they write flawless code the first time, they never make mistakes, they don't blow things up.

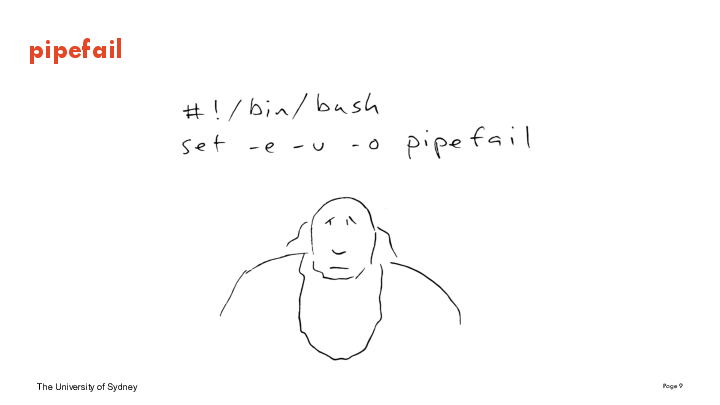

And this is what happened to some critical internet infrastructure late last year because of a missing command-line flag in a shell script. Not to go into too much detail, but in shell scripts you can chain programs together to make a pipeline - the first program does something, then passes the results to the next, and so on - and the default behaviour if one of the programs fails is to just carry on regardless. You can set an option called ‘pipefail’ which will make the script do the sensible thing, which is to treat the whole pipeline as failed when one of the parts fails, so that the script can stop rather than carry on. One of the major content distribution networks deployed a one-line shell script which didn’t have that option set, one of the pieces broke, one thing led to another and large parts of the internet were painfully slow until they fixed it. (The fact that they were transparent about this was kind of admirable.) The ideal programmer, the sorcerer who never makes mistakes, is imaginary.
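Here is a small sketch of the difference that option makes. It assumes `bash` is available on the PATH, and it uses `false | true` as a stand-in pipeline, not the script from the actual incident; the function name is made up for illustration.

```python
import subprocess


def pipeline_exit_code(pipefail: bool) -> int:
    """Run a two-stage pipeline whose first stage fails; return bash's exit code.

    Assumes bash is installed. `false | true` is a stand-in pipeline:
    `false` always fails and `true` always succeeds.
    """
    script = "false | true"
    if pipefail:
        # With pipefail set, the pipeline reports the status of the last
        # stage to fail, rather than just the status of the final stage.
        script = "set -o pipefail; " + script
    return subprocess.run(["bash", "-c", script]).returncode


if __name__ == "__main__":
    print("default: ", pipeline_exit_code(pipefail=False))  # 0: failure hidden
    print("pipefail:", pipeline_exit_code(pipefail=True))   # 1: failure reported
```

On its own, pipefail only changes the status which the pipeline reports; scripts usually pair it with `set -e` so that a non-zero status actually stops the script.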

When people say “I’m not a software engineer” they are making a good point – we can’t be everything. Being a researcher is enough of a career, without feeling that you need to have a whole other profession on top of it. And some parts of software engineering do require specialised, detailed knowledge of how computers and programming languages work, but what I want to talk about now are simple tools and techniques which are part of the practice of software engineering. These aren’t very abstract or esoteric things; they are guardrails – things which reduce the impact of being wrong. Tools which allow us to check for some bugs automatically. Tools which allow us to do the same thing twice - I'm not talking about reproducibility in the scholarly sense, but just, can I get this to build? Can I get it to work when I get a new laptop? Tools which allow us to work on our code and break it, but make it easy for us to take it back to the state it was in when it was working. There's also a cultural goal behind thinking of software engineering as a set of tools to allow fallibility and safe mistakes: a culture which helps us collaborate, and avoids the intimidating ideal of the superhuman programmer.

Managing separate Python environments is not a new idea. One of the problems in this space is that there have been a lot of different ways to manage the dependencies of a Python project, but platforms like Conda, which also provide development environments and libraries of pre-compiled packages, are more or less standard practice now. They can't, unfortunately, save you from having to untangle dependency problems, but they can at least stop the dependencies of different projects interfering with one another. renv provides R coders with the same ability to snapshot a project’s dependencies, capture them, and then replay them.
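To make the idea of a snapshot concrete, here is a minimal sketch using only the Python standard library. It is neither Conda nor renv, and the function name is made up for illustration: it just lists what is installed in the current environment as `name==version` lines. Real tools record a good deal more than this and can also restore the environment from the snapshot.

```python
from importlib import metadata


def snapshot_dependencies() -> list[str]:
    """Return the current environment's packages as sorted 'name==version' lines."""
    pins = set()
    for dist in metadata.distributions():
        name = dist.metadata.get("Name")
        version = dist.metadata.get("Version")
        if name and version:  # skip broken installs with unreadable metadata
            pins.add(f"{name}=={version}")
    return sorted(pins, key=str.lower)


if __name__ == "__main__":
    # Redirect this output to a file to keep a record alongside your code.
    print("\n".join(snapshot_dependencies()))
```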

Another kind of guardrail is static analysis: tools which check your code without running it. Formatters like black for Python or styler for R will enforce a particular style on your code – which frees you from having to worry or argue about it. Linters, like flake8 or lintr, check for certain classes of error, such as libraries which are imported but not used, or variables which are used before you’ve assigned anything to them. These are small things and often wouldn’t matter, and cleaning them up can seem like a fuss, but once you’re used to tools like this, you miss them when they’re gone. Most modern programming environments have integration for these tools, and you can also make them part of your source control workflow, so they are run automatically when you commit a change: pre-commit is a useful utility which can run static checkers for multiple languages in the same project.
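To show what "checking code without running it" means, here is a toy check for imports which are never used, written with Python's built-in `ast` module. It is a deliberately simplified sketch, not how flake8 or any other linter is actually implemented, and the function name and sample code are made up for illustration.

```python
import ast


def unused_imports(source: str) -> list[str]:
    """Return names which are imported in `source` but never referenced.

    The code is parsed, not executed. This is a simplified check: it ignores
    __all__, re-exports, star imports and names used only inside strings.
    """
    tree = ast.parse(source)
    imported: set[str] = set()
    used: set[str] = set()

    for node in ast.walk(tree):
        if isinstance(node, (ast.Import, ast.ImportFrom)):
            for alias in node.names:
                # `import a.b` binds the name `a`; `import a as b` binds `b`
                imported.add(alias.asname or alias.name.split(".")[0])
        elif isinstance(node, ast.Name):
            used.add(node.id)

    return sorted(imported - used)


EXAMPLE = """
import os
import sys
from math import sqrt

print(sqrt(16), sys.argv)
"""

if __name__ == "__main__":
    print(unused_imports(EXAMPLE))  # ['os']
```

The sample code is never run: the check only looks at its structure, which is why this kind of tool is safe to run automatically on every commit.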

Git is perhaps the best example of a software system which the rest of the world thinks you have to be a wizard to be able to use, and which wizards themselves can't use. It's very useful, it's become the industry standard, it's a basic requirement for collaborating on most open-source projects, and all of us have gotten into a horrible mess with it. Talking in detail about this is a bit beyond the scope of this talk, but I’ve got a link to an article called “What’s Wrong with Git: a Conceptual Design Analysis” if you’re interested. Git is the classic case of a tool which its users have invested so much time and emotion into learning that they can be either defensive, or actively hostile, to people who haven’t made the same investment. The good news about git is that it's increasingly integrated into development environments. Most IDEs and platforms like RStudio support git out of the box. And there are plenty of GUI clients which provide an easier way to use it. And these options are not just for novices – they’re for users at all levels.

We associate site reliability engineering and devops with enterprise systems and web-scale platforms, when many of the tools we work on have much smaller user bases. But one of the good things about devops is that the tools sort of scale - from a developer's laptop to a cloud deployment. A very common problem in the research domain is an app which might be on an old technology stack but which still has an active user base and needs to be kept alive, but which, from an IT department's point of view, is a liability. Docker provides a way to wrap these up and make them easier to test and maintain. This is work which requires some investment, but it can be the difference between 'that server or VM in the corner which no-one knows how to fix' and something you can spin up on your laptop to debug. And Docker can be used to deliver applications to users. An app which used to need a server in the 90s might now be better distributed as a local web app in a Docker container. Bioinformatics is making use of pipelining technologies like Airflow and Nextflow – some of these are native to the research space, but others were developed in industry. There’s a lot of opportunity here to take tooling developed for one domain and use it to run other sorts of computational pipelines, such as machine learning.

I want to finish by returning to something more important than tools, which is culture. Why do programmers so often use the language of disgust to talk about code, especially their own? I’m a bit evangelical about Felienne Hermans’s book The Programmer’s Brain, which takes a cognitive approach to how we read and write code. She starts the book by talking about confusion. Confusion is an essential part of programming. When we're reading a codebase - someone else’s, or our own from a few years or months ago - we get confused, which is an uncomfortable place to be. Reading someone else's code is a peculiar form of psychological intimacy – you need to form a mental model of how this code works, and if that mental model isn't like the ones you are used to forming, this is also uncomfortable. So programmers often use visceral language to talk about these feelings. It's also common for IT professionals to talk like this about research tools and languages which they're not familiar with. People whose experience has been with mainstream programming paradigms often have this reaction to R, despite R being a really important and useful tool for data science which comes from a different family of programming languages. This is not professional, and it’s another form of gatekeeping: the idea that some ways of using a computer aren’t real programming.

All of this is why code review can be so intimidating. As a practice it's probably one of the best things you can do to improve your work, and platforms like GitHub provide really approachable support for it, but it's intimidating. It makes you vulnerable, in a cultural space where we’ve spent decades using gatekeeping, and the sorts of visceral language I've just talked about, to dismiss languages or techniques we don't like or don’t fully understand. Code review is also hard when you're in a small team, and it's hard to budget time for. One way to make it easier is to frame it as collaboration, rather than an exercise in criticism, and to lead with the parts you want help with. And this is a situation where it’s on the more experienced members of a team to create a comfortable space for those without a software engineering background.

I’ve already mentioned the Research Software Engineering Unconference in September: this brought together researchers who code and IT professionals in a way which was really inspiring. A lot of coders working in this space are somewhat isolated, and we are hoping to keep the community-building momentum going. One of the keynotes mentioned that the NumPy community have a newcomers hour to make it easier for people who aren't software engineers or “real programmers” to contribute to the codebase - this is a fantastic idea for open source communities.

I’ve collected links to the tools I’ve mentioned here or you can use the QR code. If the idea of helping researchers improve their digital skills appeals to you, we’re currently recruiting a Data Science Trainer for a fixed term role – there’s a link on the page I’ve given there. Thanks for your time.